By Rodger Morrow, Editor & Publisher, Beaver County Business

For the past few years, artificial intelligence has been marketed the way miracle tonics once were: bright labels, extravagant promises, and a suspicious aftertaste of snake oil. We’ve been told—repeatedly—that intelligence itself has finally been solved, cracked open like a walnut by models that can write emails, poems, and freshman philosophy papers at an industrial scale.

And to be fair, these machines are impressive conversationalists. They can explain metaphysics, draft zoning ordinances, and politely apologize when they hallucinate a court case that never existed.

But here’s the unsettling possibility: what if all this time we’ve been mistaking eloquence for intelligence?

That question matters a great deal in places like Beaver County, where intelligence has never been defined by how well you talk about work, but by whether the thing you built actually holds together under load, vibration, weather, and time. A circuit breaker doesn’t get extra points for eloquence. It either trips—or it doesn’t.

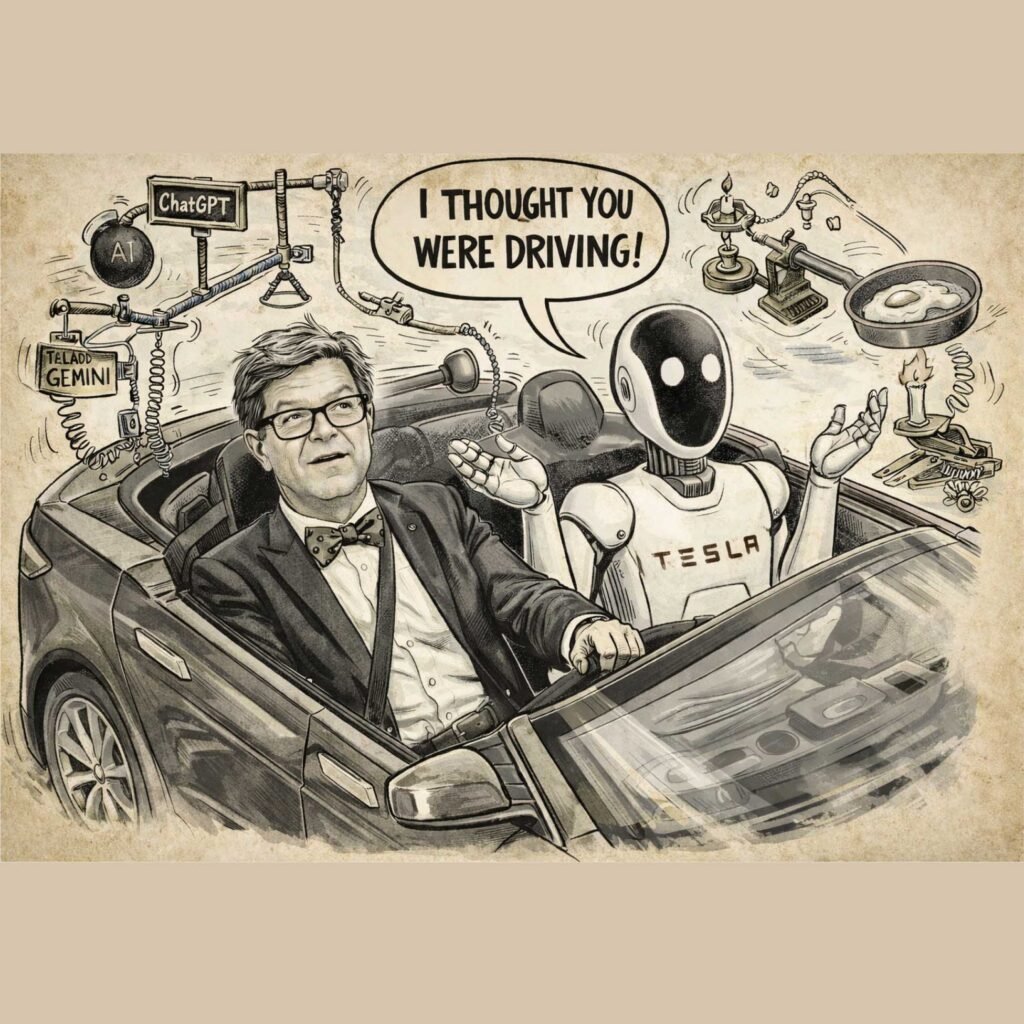

That distinction sits at the heart of a new architecture proposed by Yann LeCun, Meta’s chief AI scientist and one of the godfathers of modern machine learning. His latest work on something called V-JEPA suggests that the last decade of AI progress may have taken a very clever, very lucrative… and possibly very wrong turn.

The Chatbot Illusion

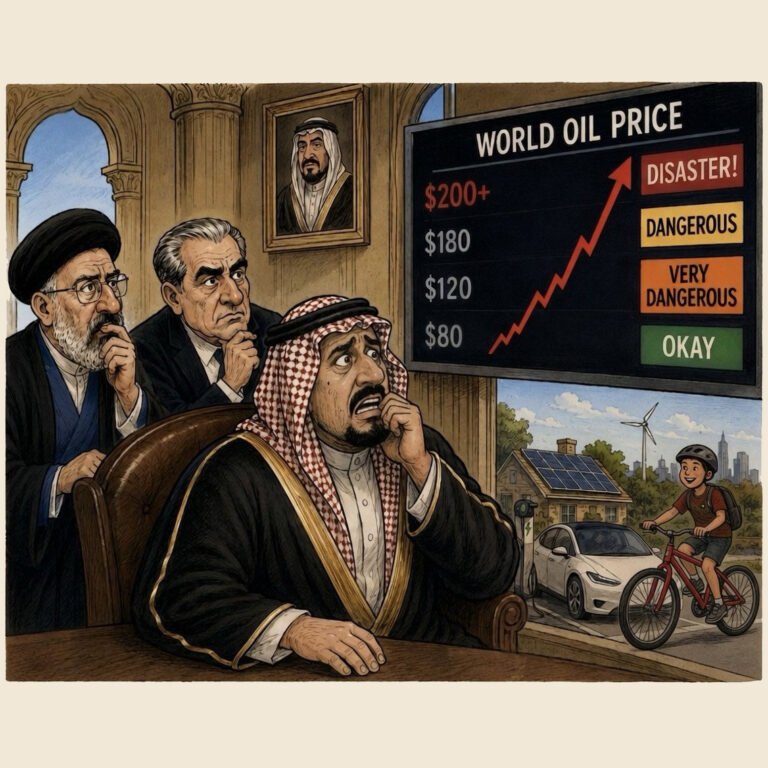

Most of today’s headline-grabbing systems—ChatGPT, Claude, Gemini—are language models. They don’t understand things so much as they predict what word is likely to come next, given the words that came before. Token after token, sentence after sentence, they assemble an illusion of reasoning by being extremely good at autocomplete.

It’s a parlor trick with industrial consequences.
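For readers who want to see the trick in miniature, here is a toy sketch of next-word prediction. It is not how ChatGPT, Claude, or Gemini are actually built (those use large neural networks); it is a simple word-pair counter, and the function names and sample sentence are invented for illustration. The principle is the same, though: guess the next word from the words already seen.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical training text, made up for this example.
corpus = "the valve failed and the line shut down because the valve failed"
model = train_bigrams(corpus)
print(predict_next(model, "valve"))
```

The counter has no idea what a valve is. It only knows which word tended to come next.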

LeCun’s critique is blunt: language is not intelligence. It’s just an output format. A four-year-old child—armed with sticky fingers and an alarming lack of OSHA compliance—has seen more of the real world than any AI has ever read in text. The child knows what happens when a box tips off a table. The model knows how to describe it.

In Beaver County terms, one knows how to run the mill. The other knows how to write the press release.

From Words to Meaning

V-JEPA flips the problem around. Instead of generating words, it predicts meaning. Instead of narrating the world, it tries to model it internally—learning how objects persist, move, collide, and cause other things to happen over time.

This is not poetry. It’s physics.

The model operates in a continuous semantic space, where “the valve failed,” “pressure dropped,” and “the line shut down” are understood as closely related events—even if they’re phrased differently. It ignores linguistic decoration and focuses on what matters operationally.

Which is precisely why this should get the attention of anyone running a plant, a logistics operation, or a power-hungry facility along the Ohio River.
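The "continuous semantic space" idea can be pictured with a little arithmetic. In the sketch below, each phrase is assigned a short list of numbers, and related events sit close together when measured by cosine similarity. The numbers are hand-picked for illustration, not output from V-JEPA or any real model, and the cafeteria phrase is an invented contrast case.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means closely aligned."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical three-number "meaning" vectors, chosen by hand.
embeddings = {
    "the valve failed": [0.9, 0.8, 0.1],
    "pressure dropped": [0.8, 0.9, 0.2],
    "the cafeteria menu changed": [0.1, 0.0, 0.9],
}

related = cosine_similarity(
    embeddings["the valve failed"], embeddings["pressure dropped"]
)
unrelated = cosine_similarity(
    embeddings["the valve failed"], embeddings["the cafeteria menu changed"]
)
print(f"valve vs. pressure:  {related:.2f}")
print(f"valve vs. cafeteria: {unrelated:.2f}")
```

A real system would learn such vectors from data, with far more than three numbers apiece. The point is only that closeness in this space tracks what happened, not how it was phrased.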

The technical twist is that V-JEPA does this with remarkable efficiency. It achieves competitive performance using roughly 1.6 to 2 billion parameters—compared to the hundreds of billions consumed by today’s chat-heavy models. Less compute. Less latency. Less electricity burned to produce clever prose no one asked for.

That efficiency is not an abstract virtue when you’re already watching data centers, substations, and transmission lines become the new industrial bottlenecks.

Why This Suddenly Matters Here

If this were just an academic squabble, it could be safely ignored. Academia produces one of those every Thursday. But V-JEPA points toward something more disruptive: AI that understands the physical world well enough to operate inside it.

That has consequences for robotics, automation, and industrial control—the very domains Beaver County knows best.

Think about inspection robots crawling through tight industrial spaces. Autonomous forklifts navigating cluttered warehouses. Maintenance systems predicting failure not because a sensor crossed a threshold, but because the pattern of movement no longer looks right. These are not language problems. They’re meaning problems.

Watch the companies building toward this future: Boston Dynamics, Tesla, and Figure. None of them are betting the farm on better small talk. They’re betting on machines that can see, predict, and act in messy environments.

Robots don’t need to explain themselves in complete sentences. They need to not knock over the shelving.

The Automation Cliff

Proponents of this approach sketch a timeline that should make local workforce planners pay attention.

In 2026, autonomous agents—AI systems that can manage workflows and execute multi-step tasks—become commonplace. In 2027, embodied AI enters the physical world at scale. And by 2028, we may confront systems that reason in abstract “meaning space,” without leaning on language at all.

This doesn’t mean Beaver County wakes up unemployed one morning. It means the nature of automation changes. AI stops being a back-office typing assistant and starts becoming a shop-floor colleague—one that doesn’t get tired, doesn’t forget procedures, and never calls off sick.

The challenge, as always, will be integration: pairing human judgment with machine perception rather than pretending one can replace the other.

Energy, Efficiency, and an Old Paradox

There’s another reason this matters locally: power.

Language models are famously energy-hungry. They burn electricity the way blast furnaces once burned coke. V-JEPA-style systems, by contrast, are leaner, cutting inference operations nearly threefold and sharply reducing energy use in tasks like video analysis and real-time control.

That sounds like good news for a region already thinking hard about grid capacity, generation, and industrial demand.

But history offers a warning, courtesy of William Stanley Jevons. Jevons noticed that efficiency doesn’t always reduce consumption. It often increases it, by making a resource cheaper and more widely used. Better steam engines didn’t save coal. They burned more of it.

AI may follow the same script. Cheaper, more efficient intelligence could spread everywhere—from factory floors to edge devices—quietly increasing total demand even as per-task costs fall.
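The arithmetic behind Jevons's warning is simple enough to sketch. Every figure below is hypothetical, chosen only to show the shape of the paradox: energy per task falls to a third, but cheaper tasks invite five times as many of them.

```python
def total_energy(energy_per_task, tasks):
    """Total consumption is per-task cost times the number of tasks run."""
    return energy_per_task * tasks

# Hypothetical figures in arbitrary energy units.
before = total_energy(energy_per_task=3, tasks=1000)
after = total_energy(energy_per_task=1, tasks=5000)

print(f"before: {before} units, after: {after} units")
print("total demand rose" if after > before else "total demand fell")
```

Whether real-world usage grows faster than efficiency improves is an open question. The sketch only shows that a threefold efficiency gain does not guarantee a smaller power bill.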

As Satya Nadella once put it, “Jevons paradox strikes again.” AI becomes a commodity, and like electricity itself, we find new ways to consume it simply because we can.

A Fork in the Road—And a Familiar One

So here we are, at a fork we didn’t realize we were approaching.

One path continues the race toward ever larger language models: more parameters, more data centers, more exquisitely worded nonsense. The other blends language with deep world-modeling—systems that understand not just what we say, but how reality behaves when no one is talking.

Beaver County has seen this movie before. We’ve watched industries that mistook paperwork for production, and others that quietly focused on process, physics, and fundamentals—and survived.

The irony is delicious. After decades of teaching machines to talk, the next real breakthrough may come from teaching them to stop talking and start paying attention.

If that’s true, the future of AI won’t be announced in a chatbot demo. It will hum quietly inside a plant, roll carefully across a concrete floor, and do something genuinely intelligent—without explaining itself at all.

Around here, that still counts as progress.